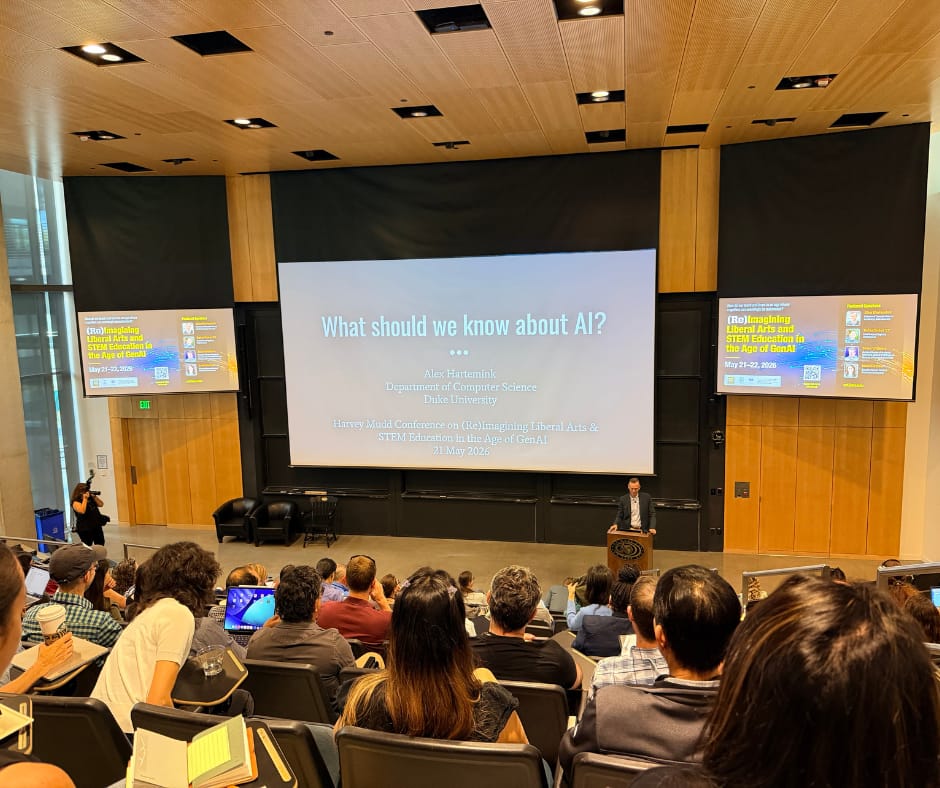

I had the privilege of attending the (Re)Imagining Liberal Arts & STEM Education in the Age of GenAI conference at Harvey Mudd College on May 21st and 22nd of 2026.

The first keynote speaker was Alex Hartemink, Professor of Computer Science (and Biology) at Duke University. He titled his keynote “What should we know about AI?” A lot of people have been talking about AI fluencies and AI awareness lately, and I was intrigued from the start to see what his talk would center on.

Some Common Questions

Alex focused on a set of common questions that, he posited, would help us establish common ground. He asked things like, “Is AI intelligent, or does it only seem to be intelligent?” “How is AI made today and who makes it?” And one of his last questions was:

Why is AI suddenly everywhere, everything, all at once?

When a slide showing screenshots from a bunch of relatable movies came up, I heard lots of murmurs from the audience. I looked at the image and recognized C-3PO, a familiar figure from my childhood, alongside HAL 9000, TRON bent down in a smoky blue background, Data from Star Trek, WALL-E holding a Rubik's cube, and a movie I had just seen the night before.

The image in the lower right-hand corner is from a movie called Her. I had not seen it before that week, but I had played a clip of it in a number of talks I have given, accompanying the “Go Somewhere” AI metaphor card game. I had finally decided to bump it up in my movie-watching queue because some geeky podcasters that Dave and I both subscribe to did a member special on the movie. I did not want any spoilers, but I was very much looking forward to the member special on the drive home from the conference.

Another image worth a comment is WALL-E. What a memorable movie. I can see why Alex included it in his collection of examples of our desire, at least in moviemaking, to make intelligent beings in our own image.

Enthusiastic Ups and Downs

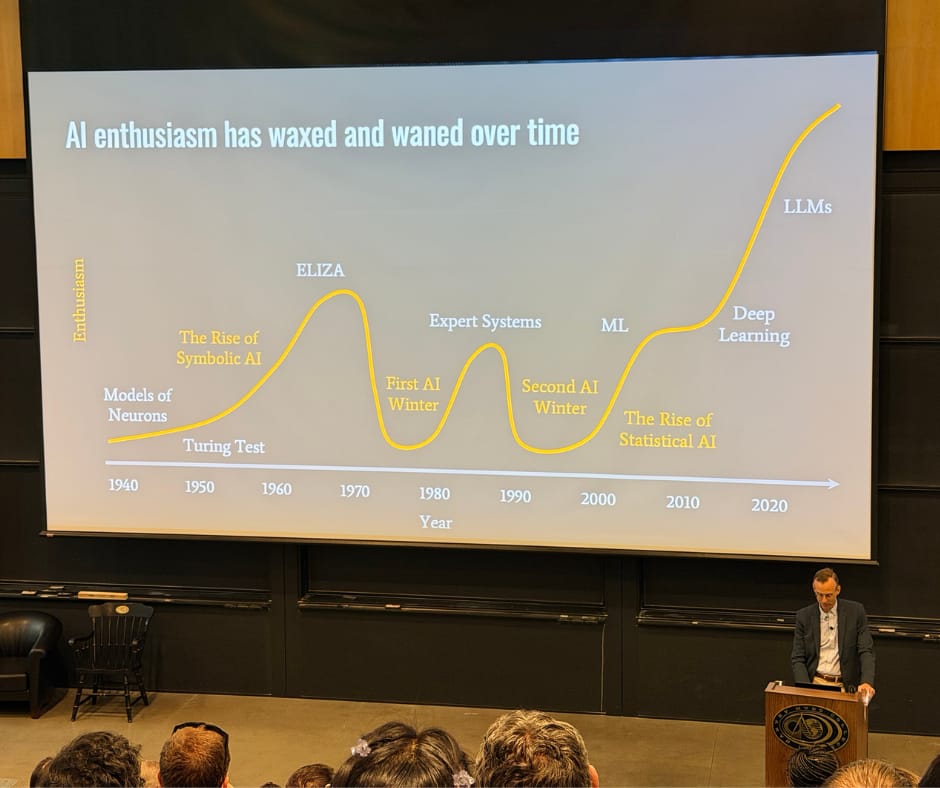

Alex then showed a timeline. He joked that he was charting enthusiasm and admitted, with a self-deprecating tone, that the exercise was less than scientific. His point was clear, though. Artificial intelligence has been around a long time, and there have been many waves.

We started in the 1940s with models of neurons, moved into the 1950s with symbolic AI and the Turing test, saw an upsurge in the 1960s with ELIZA, and then hit the first AI winter in the 1970s. Expert systems rose in the 1980s. The second AI winter came in the 1990s. Machine learning followed, statistical AI plateaued in the 2000s and early 2010s, deep learning came along, and then in the 2020s and beyond, large language models.

Many of us in the room seemed to know about ELIZA, the early attempt to turn a computer program into a therapist. Alex showed how interest waned after that, all the way to a big surge around deep learning. After 2020, the chart climbs sharply on interest in large language models.

Alex defined a lot of terms, which was nice for me since many of them were familiar. What his talk did was help center me on where we find ourselves today in relation to the past.

Algorithms, Models, and Products

Alex explained that we need to be able to distinguish between AI algorithms, AI models, and AI products. That information was not entirely new to me. It did make me more curious about what people mean when they say they are against using AI entirely. I want to ask them more about that, to see if we share a common understanding of these various concepts.

How Stochastic Parrots Produce Human-Sounding Output

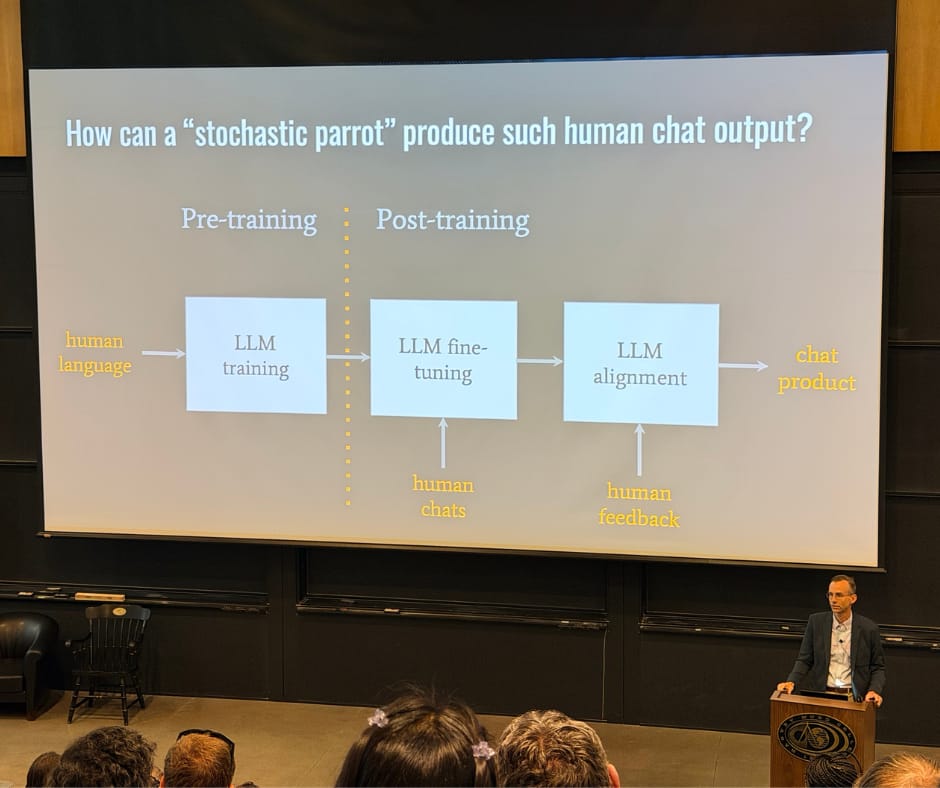

Alex gave examples of the different ways that AI gets referred to when we try to describe how it works or does not work. One example came from Emily Bender and her co-authors in their now well-known stochastic parrots paper. The metaphor asks how a parrot that randomly repeats back what it has heard can produce such human-sounding chat output.

The diagram Alex shared illustrated a piece I want to remember for when I'm attempting to describe how AI works. After a model has been pre-trained, the output of an LLM is shaped by more than just human chats. One part of that shaping is the fine-tuning that happens after pre-training. Another is alignment, and that is where human feedback comes in.

Two Large Misconceptions

Alex wanted to be sure to address two large misconceptions.

The first, and he said probably the largest, is that when we receive output from an AI tool, we assume that the AI means what it says. I am quoting from his slide here (it describes what the AI isn't actually doing, despite our thinking that's what is happening):

It's guided by meaning, purpose, truth, knowledge or intention.

The second misconception had to do with the future of AI being inevitable. Alex wanted to remind us that we have a lot of power to shape and imagine a future for artificial intelligence and how it should look. That is particularly true in the context of higher education.

Education and Imagination

Alex reminded us that the word education comes from the Latin ex plus ducere, “to lead out or draw forth.” Lead out of what, and draw forth into what? The second word he broke down was imagination. To imagine is to “represent or form an image” in the mind, from the root word imago.

Alex closed his talk with a couple of questions, and I will close this post with them as well.

On imagination, he asked:

What kinds of minds are necessary to preserve our imagination?

And on formation: people are formed. How? By whom? And for what end?

Who forms a person's intellect, imagination, character, will, desires?

I'll be sharing more about the conference in the coming weeks, but want to close this post by thanking the conference planning team for a wonderful event. It was a rough time to be traveling, even if I only drove 1.5 hours to get there. I'm still glad I took the time to be there, though, given how much I learned through the experience.